Learning from random events

Randomness and uncertainty are poorly understood by most people, so here’s a moderately advanced puzzle to help.

This is a post about a math puzzle; readers beware.

Let’s say we have a random device, like a coin-flipping machine, that generates checks (✔︎) and crosses (✘) with some odds, not necessarily equal; say the probability of getting a check is p, between 0 and 1. Call this device the Mickey Bricks coin flipper.1

What we’d like to know is how to get an estimate for p from a few observations.

What should our estimate of p be after one check? Two checks? One check and one cross? Five checks in a row?

How to make your own Mickey Bricks coin flipper

One way to create such a random generator is to use a formula like

IF(random[0,1] < p) THEN "check" ELSE "cross"

Note that the p is a parameter here, a number between 0 and 1; it’s a parameter that we don’t know. It’s not uncertain itself (our lack of knowledge makes it uncertain to us) and it’s not itself a probability (using it in that formula makes the result, check or cross, probabilistic).

Since we don’t know p and from our viewpoint it comes with uncertainty, it’s called a random variable and its distribution depends on indirect information, namely the sequence of checks and crosses.

Now, back to our problem.

Learning from data

We can even be very au courant and call it machine learning.2

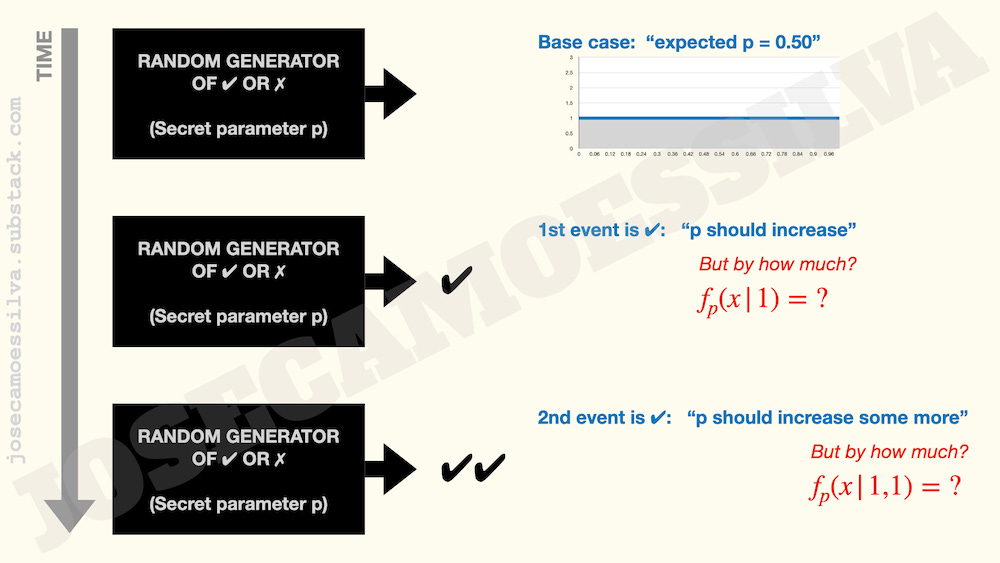

Before any events, we can hypothesize about values for p, or more accurately, about what we expect the probability of a check as the first event to be: since there’s no reason to expect that high values of p are more likely than low values of p, we might say that the “expected p” is 0.50.

But we should be very careful about what that means, because it doesn’t necessarily mean that we believe the true (unknown) p is 0.50; it just means that without further information we expect the random generator to be as likely to produce a check or a cross, which it would do if p was 0.50, or if p had to be 1.00 or 0.00 with 50% chance of either, or p could be any number between 0 and 1 with equal likelihood.

This matters because it’s very different to say “p can be any value between 0 and 1, with equal probability of taking any of those values” and “p = 0.50.”

So far so…, well, not-too-hard, but now we get our first observation and, as shown above, it’s a check. So we would think that this should “push” our guess about the values of p “upwards.” But, as the red question asks, by how much?

And how can we get a number from a single observation of a binary outcome (check vs cross)?! Even frequentists won’t say that observing a check makes p = 1.00!

Even when the first few observations are all checks, that doesn’t mean that p has to be 1.00, as can be seen in the following table:

As we can see, even a sequence of five checks in a row happens more than half of the time when p is 0.90, so a few checks in sequence don’t mean that p is 1.00. For shorter sequences, like two checks in a row, all it takes is for p to be over 0.75 for that to happen over half the time, as we can see on the table.3

Thinking in distributions

What we need is a distribution of the possible values of p, as a function of the observed events (checks and crosses).

The case with no information (called a prior distribution, because it’s before any event is observed) is easy: since there’s no reason to think any value of p is any more likely than any other, we choose a uniform distribution over [0,1]. That distribution has average 0.50, which gives us the “expected p” of 0.50.

Now that we have a distribution, we can use the informativeness of the events, because each point x in the support of that distribution has an associated probability of generating a check (that probability is x, itself, because that’s the number that is being hypothesized as possible p, at that point) or a cross (1-x).

(Note: the distribution is for p, a random variable, i.e. a number with uncertainty attached; each point we analyze is denoted x, a number, to be treated as a candidate for p during the analysis: “what would happen if the unknown p were really this number x?” The support of the distribution is the interval [0,1], all the values that p can theoretically take. The analysis works by letting x take each of these values in turn; since they’re continuous, this will mean calculus.)

So we have: a prior distribution, defining the state probability for the state (p = x), where x takes all values between 0 and 1, in turn; and a set of conditional distributions of the events on the states (p = x) for all values of x (if p = x then we observe check with probability x and cross with probability 1-x).

Thus we have all we need to apply Bayes’s rule to compute the probability distribution after integrating the information from each event.

Using Bayes’s rule, we get the distributions indicated in the above picture: the first check slants the distribution towards the upper end, the second check makes it even more high-end heavy (and nonlinear). If we compute the expected value of p from those distributions we get 0.50 for the prior, 0.67 after one check, and 0.75 after two checks. (Computations at the end; they use calculus!)

More data improves information

This raises an interesting question: what happens if we see a check followed by a cross? Our first reaction should be that the information in the cross somehow “reverses the gains” of the check, so that the expected p is back to 0.50.

But in fact, our information has increased: now we know, for example, that p = 1.00 isn’t possible. So, the distribution of probabilities for values of p has to change to reflect our new knowledge: while the expected probability of a check is now the same as when we had no information, the distribution of the values for p now must be middle-heavy (because values of p closer to 0 or 1 are less likely to produce one check and one cross than values of p closer to 0.50).

The following picture shows the distributions in detail (assuming the first event is a check), and they allow us to get an expected value for p (the average of the appropriate distribution).

So now we have the answer to what the “expected p” are; but we also have a much better sense of how information comes from data, because we have the distributions. For example, while the average after a check and a cross is the same as that of the prior, the red distribution contains much more information than the grey distribution.4

The following picture shows all distributions up to after the second event:

(The symmetry of the distributions for crosses and checks and the path-independence of the middle one in the third column serve as a validity check on the logic.)

So that’s the solution to this math puzzle: doing the math!

Math used in the post

Pictures, because there are no math affordances on this platform. Yet. (One hopes.) In what follows the checks are called “1” and the crosses are called “0.”

My book includes a Bayesian interlude, but doesn’t require calculus; get a free sample!

All math derivations were done by hand on the backs of grocery store receipts, so a nontrivial chance of error exists.

Mickey Bricks is the work name of Michael Stone, fictional inside man (and leader) of a team of London grifters, from the BBC show Hustle. Whenever there’s a decision to be made by coin flip during a con (usually to convince the mark to trust the grifters), the coin always comes out the way that works best for the con.

If we use a calculator: because we’re learning and using a machine. All kidding aside, Bayesian methods are common in machine learning; they are based on exactly what this post is about, but more complex.

Interestingly, many of the people who use Bayesian machine learning tools — even some who self-describe as “experts” — can’t solve the problem in this post when presented as an interview question (true story), which raises some questions about depth of understanding versus building programs by treating code libraries like Lego blocks.

Imprecise text but basically what this means is that if we repeated this infinite times, we’d get the results described in the paragraph. Lower numbers of repetitions (than infinity) wouldn’t be guaranteed, but would be close: if we had a million Mickey Bricks and each of them had a secret p = 0.75, we’d expect that more than half a million of them would have two checks in a row as their first two observations.

Really! Readers who prefer to ‘trust but verify’ should compute Shannon information for both to check. (And should get: 0 bit for the uniform and 0.18 bit for the red distribution.)