Much ado about the p-valoo!

In which we look at problems with some published results in almost-but-not-quite-STEM areas

A recent paper in PNAS has shown that 73 teams of social science researchers, given the same research question and data set, produced hundreds of models with contradictory, though sometimes highly confident, results.

We can learn something from that.

In the beginning was the data…

Let's all get on the same page regarding statistical testing.

There's some causal relationship we're interested in: how does action X affect metric Y? For example, action X is “watching the full series of Carl Sagan's Cosmos”; metric Y is “difference in number of correct answers in [some] science questionnaire Q before and after watching.”

Q is called a “measurement instrument,” a way to suggest that questionnaires are as well-founded and reliable as oscilloscopes or mass spectrometers. In our case, Q has 100 multiple-choice questions about basic science covering established physics, chemistry, biology, and geology facts.

Watching Cosmos has different individual effects: some people learn nothing, some learn wrong things (anti-learn), and some learn real things. The degree of learning (or anti-learning) is also different for different people.

Ideally, we'd like to know this effect for the whole population. We'd have everyone take the Q test, then watch the full series of Cosmos, then take the Q test again. We'd then have, for each individual, a measure of their personal learning from Cosmos.[1]

In reality we can't test every action on the entire population, so we need to use a sample for the measurement and then extrapolate to the population.

(I wrote a book about this and much more: get your free sample here. Sample, get it? You can read a Substack post about it here.)

Assuming we have a representative sample of 200 people, we run an experiment: the people, called here experimental subjects, answer the questionnaire, then watch Cosmos, then answer the questionnaire again. We then subtract the number of right answers in the first Q from the number in the second Q to get our metric.[2]

We now have a database with 200 numbers in it, and we want to make assertions about the 330 million US citizens. What powerful magic can help us achieve this?

A combination of four sorceries: statistics, probability theory, estimation, and testing. (For details see my book. The details are in the “statistics are weird” interlude and its technical notes.)

First, we compute the statistics (already in the figure above). The average is 1, which means nothing in the sample, as we can see; and the variance is 193, which means that there’s a lot of dispersion, as we can also see.

Now comes the magic: deciding whether that average of 1 is “significant.”

… then, Abracadabra, significance!

Significant means “this result should be taken seriously.”

Really, that’s what it means, most of the time. If that average of 1 is significant, then we “know” (in some sense) that if the entire US population of 330 million watched Cosmos, there would be 330 million more total correct answers in the post-watching questionnaires than in the pre-watching questionnaires.

Well, sort-of. (But not really.)

Let’s start with our data and figure out what it says about the population parameter.

The population parameter is the average we would get if we ran the experiment with the entire population. This is one fixed number, since there’s only one possible “sample” that includes the whole population; but that number is unknown. Because it’s unknown, we’ll treat it as a random variable, i.e. a variable that takes different values with different probabilities.[3]

Using two incantations from probability theory called Law of Large Numbers and [one of the] Central Limit Theorem[s], we can create a distribution for the population parameter (the population average). (See my book for details.)

In simple terms, the population average is more likely to be closer to the sample average than far from it; the variance of the population average is related to the sample variance and to the sample size; and the distribution is a Gaussian Normal.

Finding that distribution is the first big step: we’ve moved from the realm of what we know (the statistics, which are just a summary of the observations) to the realm of speculation. It’s useful speculation called estimation, but it’s done on faith; since mathematicians don’t like the word “faith,” they call it “assumptions.”

With that distribution, we can compute something called a confidence interval, which is related to significance and is easier to explain.

A given confidence interval, say the 95% confidence interval, is the interval of values for the possible population parameters, centered on the sample average, that has a total probability of 95%; that’s the non-shaded area in the distribution above, indicated by a blue arrow. (The boundaries of that interval are called “margins of error” in political polling and market research.)

As we can see from the blue arrow, 0 falls inside that confidence interval, which means that we can’t be 95% confident that the true population parameter isn’t 0. The simulation at the bottom shows the problem: with the distribution we have, there are far too many possible samples out there where this distribution, which is our best description of the population at this juncture, would give a sample average of 0 or smaller (the ones that fall to the left of the zero point).
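For readers who want to check the arithmetic, here is a minimal sketch of that interval using only the three numbers quoted in the text (200 subjects, average 1, variance 193). It assumes the Gaussian approximation described above and the conventional 1.96 multiplier for 95%; the variable names are mine, not the book's.

```python
import math

# Numbers quoted in the text.
n = 200
sample_mean = 1.0
sample_variance = 193.0

# Standard error of the mean: how much the average wobbles from sample to sample.
standard_error = math.sqrt(sample_variance / n)

# 1.96 is the two-sided 95% point of the standard normal
# (assumption: the normal approximation is good enough at n = 200).
z_95 = 1.96
ci_low = sample_mean - z_95 * standard_error
ci_high = sample_mean + z_95 * standard_error

zero_inside = ci_low <= 0.0 <= ci_high

print(f"standard error: {standard_error:.3f}")
print(f"95% CI: [{ci_low:.2f}, {ci_high:.2f}]")
print(f"0 inside the interval: {zero_inside}")
```

The interval runs from roughly -0.93 to 2.93, so 0 sits comfortably inside it, which is the situation the blue arrow depicts.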

The p-value is related to the confidence interval, but is defined in a different way: it’s the probability that we’d get a sample average at least as far from 0 as the one we got if the true population parameter was 0. Numerically, if the 0 point in that distribution sits right on the boundary of the (1–p) confidence interval, that p is the p-value.[4]
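A rough sketch of the corresponding p-value for the Cosmos numbers follows. It assumes a two-sided z-test with a normal approximation, which is only one of several possible tests (the footnote on p-values applies in full); `normal_cdf` is a helper written for this sketch.

```python
import math

# Numbers quoted in the text.
n = 200
sample_mean = 1.0
sample_variance = 193.0

standard_error = math.sqrt(sample_variance / n)

# Test statistic: how many standard errors the sample average is from 0.
z = (sample_mean - 0.0) / standard_error


def normal_cdf(x):
    """Cumulative distribution function of the standard normal."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


# Two-sided p-value: probability of an average at least this far from 0,
# in either direction, if the true population average were 0.
p_value = 2.0 * (1.0 - normal_cdf(abs(z)))
significant = p_value < 0.05

print(f"z = {z:.2f}, p-value = {p_value:.2f}, significant at 0.05: {significant}")
```

Under these assumptions the p-value lands around 0.31, nowhere near the 0.05 threshold, which agrees with 0 being inside the 95% confidence interval.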

If that p-value is above a certain threshold given by The Gods (journal editors), that supposedly means that if we repeated our experiment many times, there would be too many results where the zero point was not in those shaded areas. For reasons lost in the sands of time, Druids have set that threshold at 0.0500 and it shall never be questioned!

As far as we’re concerned, a p-value lower than 0.05 means that The Gods (journal editors) believe that there would be enough cases in which the zero point falls in that shaded area; so the result is anointed as “publishable,” the highest known honor in the soft fields.

(In STEM the highest honor is “this is an accurate representation of reality and therefore engineers can use it to build stuff.”)

So, that’s what significant means: the coefficient for the variable is acceptable to the academic tribe as nonzero and the variable is deemed important for decision-making.

Now, how does it go wrong?

The miraculous data point that makes results significant

Obviously there are many ways for things to go wrong, and between blatant falsification and crass incompetence we could certainly find plenty of explanations.[5]

But there are some more subtle ways in which people get things wrong, and they may even lie to themselves that they’re following the right procedure.

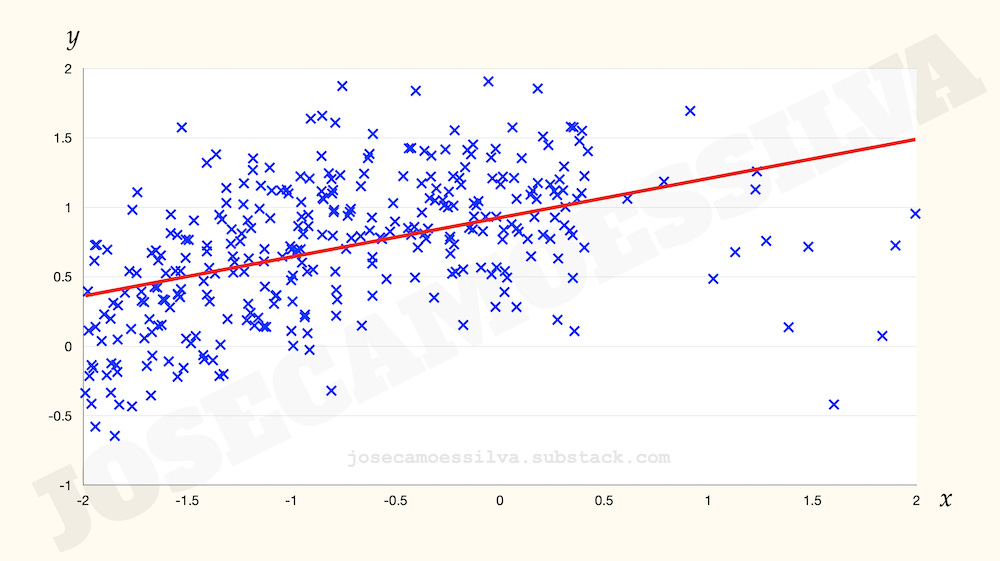

We’re going to use an example from my book, of very noisy data with a weak (but true) causal relationship between the x and the y.[6]

At first, the data set has 364 data points. Researcher Riley is very sad, because with 364 data points it appears there’s no effect; as journals are reluctant to publish “no effect” papers (which results in publication bias, something that is a problem in itself), there will be no paper after all this work of running a questionnaire in two large lecture sessions of an introductory psychology class.

But when all seems to be lost, a graduate student runs in with an extra questionnaire, one that had been left in the classroom by mistake. Two numbers are added to the data set, angels sing, and with 365 data points the result is now significant, publication is likely, and all is well with the world.

Now, that data point doesn’t really seem to be all that special; it’s right there in the middle of the blob, so how can it be that influential?!

It really isn’t. Its influence is a pure artifact of the arbitrary choice of 0.05 as the magical Gods-anointed threshold for significance: the p-value with 364 points is 0.0517 (Unworthy!) and the p-value with 365 points is 0.0497 (Worthy!), a negligible change in numeric value that is elevated to truth-defining by the artificial threshold.
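To see how easily one extra observation can carry a result across the line, here is a small simulation in the same spirit. It is a sketch, not the book's R code: the true slope (0.1), the noise level, the seed, and the helper `slope_p_value` are all choices made for this illustration, and the p-value uses a normal approximation to the t distribution, which is reasonable at these sample sizes.

```python
import math
import random


def slope_p_value(xs, ys):
    """Two-sided p-value for the OLS slope (normal approximation to the t test)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    sse = sum((y - intercept - slope * x) ** 2 for x, y in zip(xs, ys))
    se_slope = math.sqrt(sse / (n - 2) / sxx)
    z = slope / se_slope
    # erfc(|z| / sqrt(2)) equals 2 * (1 - Phi(|z|)).
    return math.erfc(abs(z) / math.sqrt(2.0))


random.seed(8)  # arbitrary seed; change it to get a different story
TRUE_SLOPE = 0.1  # weak but real effect (hypothetical value)

xs = [random.gauss(0.0, 1.0) for _ in range(400)]
ys = [TRUE_SLOPE * x + random.gauss(0.0, 1.0) for x in xs]

# p-value of the slope as questionnaires trickle in, from n = 300 to n = 400.
p_values = {n: slope_p_value(xs[:n], ys[:n]) for n in range(300, 401)}

# Sample sizes at which adding one point flips the verdict at the 0.05 threshold.
flips = [
    n for n in range(301, 401)
    if (p_values[n] < 0.05) != (p_values[n - 1] < 0.05)
]

print("verdict flips at n =", flips)
for n in flips:
    print(f"  n={n - 1}: p={p_values[n - 1]:.4f}  ->  n={n}: p={p_values[n]:.4f}")
```

Depending on the seed, the verdict may flip several times or not at all. When it does flip, the two p-values on either side of the threshold are typically almost identical, which is the whole point.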

We might be tempted to think that more data is better and that’s partly what’s happening there, but here’s the rub: data point 366 reverses this important discovery back to the realm of “x doesn’t influence y”:

A second graduate student arrives next, bringing yet another questionnaire. Another two numbers are added to the data, and —everyone holds their breath— cataclysm and damnation! The result is no longer significant!

The graduate student who brought this questionnaire might suddenly realize that a questionnaire that was left in the classroom might have been tampered with and is therefore unreliable. Lo and behold, the result is significant again. N = 365 was the right number all along, and that is the data that will be used for publication.

And no one will believe in their heart of hearts that they did anything wrong.

Models are powerful tools: handle with care

Significance is one thing, but how can different teams find models that have significant but contradictory relationships?

Let’s say we have the following data relating the normalized intelligence of an audience member (x) to their liking of the nightly news (y), in some reaction scale:

A naïf market researcher would conclude there’s no effect, as naïf researchers are enamored of linear models (or aren’t even aware that the tools they use are linear-model-based) and therefore will find no significant relationship:

A less-naïf market researcher (or one that learned to plot data prior to statistical analysis) might find that there’s a nonlinear relationship, in fact a non-monotonic relationship: the farther (in either direction) an audience member is from average intelligence, the less they enjoy the nightly news. This might be due to many factors that cannot be determined from this data alone.
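The contrast between the two researchers can be sketched with made-up data. Everything here is an assumption for illustration: an inverted-U relationship y = -x² plus Gaussian noise, a symmetric grid of x values, and a tiny `ols` helper written for this sketch. The less-naïf fit is still ordinary least squares, just applied to x² instead of x.

```python
import random


def ols(xs, ys):
    """Return (slope, intercept, r_squared) for a one-variable least-squares fit."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy * sxy) / (sxx * syy)
    return slope, intercept, r_squared


random.seed(1)  # arbitrary seed

# Hypothetical data: normalized intelligence x, liking of the news y.
xs = [-2.0 + 4.0 * i / 200 for i in range(201)]
ys = [-(x ** 2) + random.gauss(0.0, 0.5) for x in xs]

# Naïf analysis: straight line in x.
linear_slope, _, linear_r2 = ols(xs, ys)

# Less-naïf analysis: straight line in x squared (a change of variable).
squared_xs = [x ** 2 for x in xs]
quad_coef, _, quad_r2 = ols(squared_xs, ys)

print(f"linear fit:    slope = {linear_slope:+.3f}, R^2 = {linear_r2:.3f}")
print(f"quadratic fit: coef  = {quad_coef:+.3f}, R^2 = {quad_r2:.3f}")
```

The straight line explains essentially nothing, while the same data regressed on x² shows a strong negative coefficient. Same data, same estimation method, opposite conclusions about whether "there's an effect."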

Enter three self-described scientists, Larry, Curly, and Moe, exalted professors of the respectable —so all those in the field say— field of Newsology. Not being mere market researchers, Larry, Curly, and Moe don’t care much for the results above.

Larry, founder of the Newsology school known as Positivologistics, knows that the relationship between intelligence and enjoyment of the news is clearly positive. Therefore a graduate student at the Larry Institute for Positivologistic Analysis is given the data set and told to analyze it “freely.”

Being smart enough to be a graduate student means said academic serf knows very well which model results will be conducive to grant renewals and future letters of recommendation and which model results will lead to job termination.

“The latest preprint from the Larry Institute for Positivologistic Analysis clearly shows that there’s a positive relationship between intelligence and enjoyment of the nightly news, after careful analysis and data cleaning to remove anomalous data:”

Curly, endowed chair of Neutralistism (positing that there’s no relationship between intelligence and enjoyment of nightly news) at the University of St. Barthélemy, also has graduate students, which means he too can prove that Neutralistism is correct by careful analysis of the data:

(The graduate student did try for a non-linear model, but by enormous coincidence —and a felicitous coincidence for the graduate student that was indeed, as finding otherwise would have been career-ending— the data did not support non-linearity.)

Moe, Dean of the Faculty of Newsology of Tunica University, a well-known Negativistologist, using a post-doc instead of a graduate student as his elevated status demands, confirms that the data fully supports the Negativistology School law that increasing intelligence decreases appreciation of the nightly news:

Summarizing the above:

And this is how, in the absence of outside validation by —say— engineering, these softer fields find themselves with models that contradict each other and with schools of thought that give contradictory predictions for the one reality (which, for some reason, they never really seem to test with a final winner-take-all test, unlike —say— physics).
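How three schools can get three answers from one data set can be sketched in a few lines. The text doesn't say which points each school's "data cleaning" removed, so the rules below are purely hypothetical: Larry's student keeps only below-average intelligence, Moe's post-doc keeps only above-average, and Curly's student keeps everything but insists on a straight line. The data are the same kind of made-up inverted-U as before.

```python
import random


def ols_slope(xs, ys):
    """Least-squares slope of y on x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


random.seed(3)  # arbitrary seed

# Hypothetical inverted-U data: liking peaks at average intelligence (x = 0).
xs = [-2.0 + 4.0 * i / 200 for i in range(201)]
ys = [-(x ** 2) + random.gauss(0.0, 0.5) for x in xs]
data = list(zip(xs, ys))

# Each school "cleans" the data differently (hypothetical rules, see lead-in).
larry = [(x, y) for x, y in data if x < 0]   # drops the right half as "anomalous"
curly = data                                  # keeps everything, fits a line
moe = [(x, y) for x, y in data if x > 0]     # drops the left half as "anomalous"

larry_slope = ols_slope(*zip(*larry))
curly_slope = ols_slope(*zip(*curly))
moe_slope = ols_slope(*zip(*moe))

print(f"Larry (Positivologistics):  slope = {larry_slope:+.2f}")
print(f"Curly (Neutralistism):      slope = {curly_slope:+.2f}")
print(f"Moe   (Negativistology):    slope = {moe_slope:+.2f}")
```

Larry gets a clearly positive slope, Moe a clearly negative one, and Curly something indistinguishable from zero. Each can report the estimate with a straight face, and none of them had to change a single number in the data.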

So, what’s different about STEM, then?

A STEM allegory

If we sent 73 teams of physicists, electrical engineers, and electronics engineers a data set of observations linking the frequency and amplitude of voltage and current in an RC-series circuit, not only would the results be consistent in model form for everyone (i.e. they would discover the impedance formula and it would be the same formula for everyone) but also they'd all have the same estimates for the values of R and C.[7]

This “experiment” is done in high-school electronics vocational classes and college labs for introductory electromagnetism. All students had better get the same results if they want to pass the class. Those results also match previous students' results, textbooks, and —of course— reality.

Reality. That’s the STEM difference.

If electromagnetism were like the softer fields, the electrical engineers and the electronics engineers would have different model forms (and obviously different estimates for R and C), but would generally say that current increased with frequency for a given voltage amplitude.

The physicists would be divided into four camps: two would find no significant effect of frequency on anything, though there would be weak evidence of either increasing or decreasing current as a function of frequency; one would find a significant negative effect of frequency on current; and the last group would argue about the validity of asking the question to hide the fact that they had no idea how to analyze data.[8]

Lucky for us, electromagnetism is a STEM field. Reality rules there.

[1] There would be no need to do anything else; this is important and most people don't understand that: they think that if we have all the information, summary statistics will tell us something more, which is impossible by definition of “all the information.”

And in fact, there would be no decision to be informed by this information, because everyone would have already watched Cosmos and it's not like we could have them unwatch Cosmos if it turned out to have been a bad idea.

[2] More careful researchers would probably code the answers separately by question instead of just counting the number of right and wrong answers, which would allow these researchers to measure the effects of Cosmos on each of the questions, but we'll keep things simple.

[3] For example, before we throw a die, the result of that throw (which hasn’t happened yet and is therefore unknown) is a random variable that can take the values 1 through 6 with 1/6 probability each. The set {1,2,3,4,5,6} of possible values is called the support for that random variable and the function that assigns each of these numbers a probability is called the probability mass function, which appears on the y axis of probability distribution diagrams and frequency histograms. (In cases where the random variable can take continuous values, like our case, it’s called the probability density function.)

[4] The derivation of p-values is completely different and requires understanding of more technical material. There are also a lot of other assumptions to make the above paragraph true, including what kind of test we’re referring to.

[5] Ironically, some people who are incompetent and therefore can’t get results legitimately, choosing instead to falsify them, get caught cheating because they aren’t competent enough to falsify data in a convincing way. For comparison, all data above is simulated, which means “it’s made up but we’re telling you that up front,” but is realistic to the point where it wouldn’t be detectable by the techniques that catch the incompetent falsifiers.

[6] The technical notes for this chapter in the book include R code to generate our own p-hacking examples. Hours of fun for people who like those things. The relevant book chapter is Pitfall 8: Interpreting model results. The book has a longer set-up and deeper and more detailed explanation but the argument there is the same as here.

[7] As an intellectual curiosity, this estimation can be done by Ordinary Least Squares (called “linear regression” by many people). Many non-linear functions can be estimated with OLS if they can be linearized up to a change of variable, like so:

(There are many functions, a very large infinity of them in fact, that cannot be estimated by OLS. But many that people think belong in that category… don’t.)
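As a concrete sketch of such a change of variable for the RC-series circuit: the impedance magnitude is |Z| = V/I = sqrt(R² + 1/(ωC)²) with ω = 2πf, so (V/I)² is a straight line in 1/ω² with intercept R² and slope 1/C². The component values, the frequency grid, and the 0.1% measurement noise below are illustrative assumptions, not numbers from any real lab.

```python
import math
import random

random.seed(7)  # arbitrary seed

# Hypothetical "true" circuit, used only to simulate measurements.
R_TRUE = 1_000.0    # ohms
C_TRUE = 1e-6       # farads
V_AMPLITUDE = 1.0   # volts

# 40 log-spaced frequencies from 50 Hz to 5 kHz.
freqs = [50.0 * 100.0 ** (i / 39) for i in range(40)]

xs, ys = [], []
for f in freqs:
    omega = 2.0 * math.pi * f
    impedance = math.sqrt(R_TRUE ** 2 + 1.0 / (omega * C_TRUE) ** 2)
    # Simulated current amplitude with 0.1% multiplicative measurement noise.
    current = V_AMPLITUDE / impedance * (1.0 + random.gauss(0.0, 0.001))
    xs.append(1.0 / omega ** 2)             # change of variable: x = 1 / omega^2
    ys.append((V_AMPLITUDE / current) ** 2)  # y = (V / I)^2

# Plain OLS of y on x: y = R^2 + (1 / C^2) * x.
n = len(xs)
mean_x = sum(xs) / n
mean_y = sum(ys) / n
slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
    (x - mean_x) ** 2 for x in xs
)
intercept = mean_y - slope * mean_x

r_hat = math.sqrt(intercept)
c_hat = 1.0 / math.sqrt(slope)

print(f"estimated R = {r_hat:.1f} ohm, estimated C = {c_hat:.3e} F")
```

Any team running this on the same measurements recovers essentially the same R and C, because the model form comes from physics rather than from the analyst's school of thought.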

[8] Based on my fading memory of an online MIT Physics lecture where the professor compared the predictive ability of Physics with the non-predicting “schools” in Macroeconomics, partly to mock economists (who are paid more than physicists) and partly to show the difference between fields that have the motions and forms of a science and fields that have the predictive abilities that define real science.