One sure million or 50% chance of 50 million?

There's more to decisions than decision theory, though judging by the discussions of this puzzle on Twitter, one might want to teach decision theory as a first step.

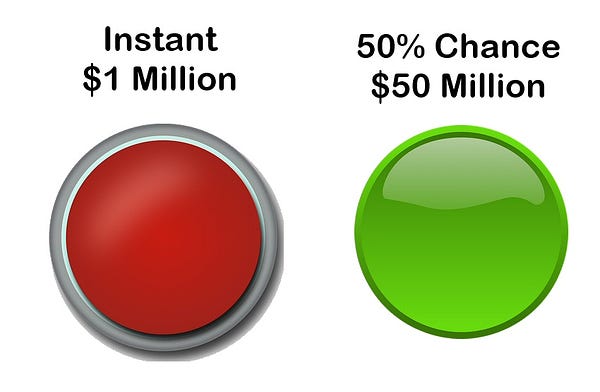

Cliff Pickover, math popularizer and prolific author, posted a variation on an old problem to Twitter this week:

Discussion ensued, with the composure, coherence, and cognizance one expects on Twitter when something grazes the topic of finance or financial decision-making.

This is an old problem (so old its solution comes from Daniel Bernoulli!), though usually the risky payoff is smaller; typically introductory lectures in decision science, economics, or finance classes use a variation where the high payoff is 5 or 10 million, mostly because it makes graphs easier to draw.[1]

Okay, let’s do the decision-science 101 thing…

As many people have mentioned on Twitter (and since this is a variation of an old problem, pretty much everywhere else where mentions are mentioned by mentioners), the decision has no right or wrong answer. It depends on the risk profile of the person making the choice (whom we'll call Decider) and that depends, among other things, on how much wealth that person already has.

The following is a simple illustration mapping a payoff to the happiness Decider receives from the payoff under two different conditions: a “poor” Decider, for whom a sure gain of 1 million dollars is a life-changer and the additional expected 24 million aren't worth the risk; and a “rich” Decider, who may forfeit the sure gain of 1 million in order to have a shot at 50.

These happiness functions (we could call them utility functions, but only if we wanted to have long pointless discussions about nomenclature with economists, and who has the time?) contain both elements of the problem archetype (the risk profile and the wealth effect).
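To make the wealth effect concrete, here is a minimal sketch that assumes a logarithmic happiness function over total wealth (the form associated with Bernoulli's solution, not necessarily the curves in the illustration). The names `log_utility`, `prefers_gamble`, and `indifference_wealth`, and the two example wealth levels, are hypothetical choices made for this example.

```python
import math

SURE = 1_000_000      # payoff of the sure option
PRIZE = 50_000_000    # payoff of the risky option when it pays
P_WIN = 0.5           # probability that the risky option pays


def log_utility(wealth: float) -> float:
    """Assumed happiness function: natural log of total wealth (wealth > 0)."""
    return math.log(wealth)


def prefers_gamble(wealth: float) -> bool:
    """True if the gamble's expected log-utility beats the sure million."""
    sure = log_utility(wealth + SURE)
    gamble = (P_WIN * log_utility(wealth + PRIZE)
              + (1 - P_WIN) * log_utility(wealth))
    return gamble > sure


def indifference_wealth() -> float:
    """Wealth at which a log-utility Decider is indifferent (only for P_WIN = 0.5).

    Solves (w + SURE)**2 == w * (w + PRIZE) for w.
    """
    return SURE**2 / (PRIZE - 2 * SURE)


print(prefers_gamble(10_000))        # "poor" Decider -> False
print(prefers_gamble(1_000_000))     # "rich" Decider -> True
print(round(indifference_wealth()))  # -> 20833
```

Under this particular assumption the switch from "take the million" to "take the risk" happens at a fairly modest level of wealth, which says more about the log function than about any real Decider; a more risk-averse curve would move the crossover up.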

Eating the fruit of the tree of strategic thinking

The problem what-hype?

The problem as presented and interpreted by mathematicians and people who aspire to think like mathematicians is an archetype (a core model, a pure logical instance), not a real decision problem. Not just because the payoffs are hypothetical (that makes it a hypothetical decision), but because there's an implicit assumption that everything in the problem is understood by all parties in the way that it's understood by the mathematician.

People who think like people, not mathematicians, may realize that this puzzle — when made real — isn't a simple decision by the Decider; it's a strategic situation, with two players: the Decider and the Proposer (the one making the offer of two buttons and then making the payment). And the behavior of the Proposer has unobservable characteristics (the random draw… but is it really random?) and is driven by the Proposer's incentives.

Let's consider the situation from the viewpoint of Decider: "If I choose green, Proposer may always pay 0, because how would I know whether that's a deliberate 0 or the outcome from a random draw? Better to not risk that and take the million."

Uh-oh, there go all those nice happiness-function-derived decisions.[2]
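A rough way to see how little distrust it takes: the sketch below assumes a risk-neutral Decider who believes Proposer runs an honest draw with probability `trust` and otherwise always pays 0. Both that belief model and the function names are assumptions made for illustration.

```python
SURE = 1_000_000      # payoff of the sure option
PRIZE = 50_000_000    # payoff of the risky option when it pays
P_WIN = 0.5           # advertised probability that the risky option pays


def expected_green(trust: float) -> float:
    """Expected payoff of GREEN when Proposer is honest with probability `trust`
    and pays 0 otherwise (hypothetical belief model)."""
    return trust * P_WIN * PRIZE


def break_even_trust() -> float:
    """Level of trust below which even a risk-neutral Decider takes the million."""
    return SURE / (P_WIN * PRIZE)


print(break_even_trust())    # -> 0.04
print(expected_green(0.03))  # less than SURE, so RED wins
```

A Decider with a curved happiness function needs more trust than this before GREEN becomes attractive, so 4% is a floor under these assumptions, not an estimate.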

Trust no one!

How do we solve the trust problem? With something in the spirit of what computer science calls a zero-knowledge proof: a way to validate the random draw that doesn’t require additional information or a trusted third party.

Here’s a possible solution, a procedure created by libertarian scifi author Travis Corcoran on Twitter, slightly altered by your humble host:

1. Proposer shows Decider a set of balls numbered 1-100, puts them in an opaque bag, and doesn't touch the bag again.

2. Proposer uses a 50-50 random device (flips a coin) 100 times, registering each outcome (pay $50MM, don't pay). Since 100 flips rarely split exactly in half, this may have to be adjusted with additional coin flips until the distribution of the outcomes in the registry is precisely 50/50, possibly by treating the registry as a FIFO register (each new flip pushes out the oldest entry). The registry is set aside.

3. Decider chooses whether to take the sure $1MM (RED) or take the risk (GREEN).

4. If Decider chooses GREEN, Decider pulls one ball from the bag, both look up the outcome of that number in the register of flipped coins, and that outcome determines how much Decider is paid.
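The four steps can be sketched as a small simulation. This is only an illustration under assumptions: the FIFO adjustment is read as "keep flipping, dropping the oldest entry, until the last 100 flips contain exactly 50 pays," the bag is modeled as a uniform draw from 1 to 100, and `build_registry` and `play` are hypothetical names.

```python
import random
from collections import deque

SURE = 1_000_000
PRIZE = 50_000_000
N_BALLS = 100


def build_registry(rng: random.Random) -> list:
    """Step 2: flip coins into a FIFO window of 100 until exactly 50 say 'pay'."""
    window = deque((rng.random() < 0.5 for _ in range(N_BALLS)), maxlen=N_BALLS)
    while sum(window) != N_BALLS // 2:
        window.append(rng.random() < 0.5)  # newest flip pushes out the oldest
    return list(window)


def play(choice: str, rng: random.Random) -> int:
    """Run the procedure once and return the amount paid to Decider."""
    registry = build_registry(rng)   # steps 1-2 happen before the choice
    if choice == "RED":              # step 3: take the sure million
        return SURE
    ball = rng.randint(1, N_BALLS)   # step 4: Decider pulls one ball
    return PRIZE if registry[ball - 1] else 0


rng = random.Random(2024)            # seeded only to make runs repeatable
payouts = [play("GREEN", rng) for _ in range(10_000)]
print(sum(payouts) / len(payouts))   # should land near 25 million
```

The point of the ordering in `play` is the same as in the procedure: the registry is fixed before the choice is made, so nothing that happens after the choice can alter which entries say "pay."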

Why do the draws first and register them? So that the Proposer can’t change the outcome of the random draw depending on the Decider’s choice, and the Decider can trust that half of the outcomes in the registry are “pay $50MM.”

Why this handling of the bag and balls? To have a random device for 1-100 that can't have its outcome predetermined by either the Decider or the Proposer.[3] With one player providing the balls and the other player picking, even if Proposer marked one of the balls in some way (heavier, for example), there would be no incentive for the Decider to pick it.

Why the 100 draws? Because with 100 draws, we expect the distribution of coin flips to have a number of “pay $50MM” somewhere near 50, and that validates the probability distribution of the device (but technically, not the randomness); the actual distribution, Binomial(100,0.5), looks like this:

Which is why we need to adjust the registry to make sure it's 50-50.[4]
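For readers who want numbers for Binomial(100, 0.5), a short sketch using only the standard library follows; the helper name `pmf` and the 40-to-60 band are choices made for this example.

```python
from math import comb

N = 100  # number of coin flips in the registry


def pmf(k: int) -> float:
    """P(exactly k 'pay' outcomes in N fair coin flips)."""
    return comb(N, k) / 2**N


p_exact = pmf(50)                             # chance of a perfect 50/50 split
p_near = sum(pmf(k) for k in range(40, 61))   # chance of landing within 40..60

print(f"P(exactly 50) = {p_exact:.4f}")
print(f"P(40 to 60)   = {p_near:.4f}")
```

A perfect split comes up only about 8% of the time, while the large majority of runs land within ten of 50, so the raw registry is usually close to fair but rarely exactly fair.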

[1] One nit to pick with Pickover (it's a common issue with “look how fun math is” popularization): the problem here is not a math problem, it's an economics or decision theory problem; math is a tool used by the sciences (and the social sciences that aspire to sciencitude), but the knowledge used to solve the problem, the semantics to the mathematical syntax, is decision theory.

[2] This is one of the common problems with some examples of over-discounting or hyperbolic discounting such as “get $10 now, in the morning, or $20 in the afternoon.” Not only is it inconvenient to come back for the extra $10, there’s also some risk that either the experimenter will not be there or that the experimental subject will be otherwise occupied.

[3] Easily, that is. A little misdirection and voilà, no more randomness (Proposer replaces both the bag and the registry with all the balls in the bag pointing to “no pay” entries in the pre-filled registry).

[4] We may think, reasonably, that this is a cumbersome procedure for a mathematical recreation tweet, and it is. But without the cumbersome procedure there are many cases in which what appear to be simple decisions can be reversed (and reversed in a way that makes paired decisions look like inconsistent reasoning) simply because elements of the actual execution of the problem as a real situation aren’t being taken into account.