Quantitative thinking or numbers as props?

Once more into the breach, in the never-ending fight against misuse of numbers.

Back when people under 90 still watched television, Bill “I'll do it live” O'Reilly had one of his most memorable TV moments when he asked the president of the American Atheists about tides. Paraphrasing for space,

Bill O'Reilly: Tide comes in, tide goes out; you can't explain that.

Atheist Maximus: Maybe it's Ba'al! Because that is as likely as your God.

The entire science/skepticism/atheism corner of social media mocked O'Reilly for his ignorance of grade-school science, and he deserved it; but all these online eAtheists, eSkeptics, and eScientists seem to have missed an important point:

Atheist Maximus didn't know what causes tides either.

Because if he did, he would have cut into O'Reilly’s rant with “it's the gravity of the Moon.” Carl Sagan most likely would have. Atheist Maximus didn’t know the science, or at least had no fluency in its use. “Because science,” to many eAtheists, eSkeptics, and eScientists is — ironically — an incantation.

They “love science” as long as they don’t have to learn any.

I was reminded of that story when I noticed a parallel phenomenon: many people use numbers to support words-arguments but don't think quantitatively. To them, numbers are props, not inputs to computation.

They “love numbers” as long as they don’t have to do any computation.

Numbers as props

Here's an example of using numbers as props: the size of the California economy.

It's my figure, made for Twitter, mostly to tell people in the US that my state is better than theirs. 😉 Yes, it's not a serious thing, but it looks like a quantitative slide of the kind you see in many words-argument presentations.

Look at all those numbers! On a table with alternating color backgrounds, to make it easier to read! It even lists the sources for the data! And the precision in the measurement of those GDPs! [1]

Clearly a strong quantitative argument for the superiority of California! 😉

And yet, after getting data from the World Bank and Statista, all I did was sort the list. The mental work was mostly in graphic design. [2]

To many people that figure looks like a quantitative argument, because there are numbers on it; a table of them, for ease of comparison. But numbers alone don’t make an argument quantitative: it’s what you do with the numbers that matters.

Let’s remember that the next time we see a slide like that in a presentation.

Now let us look at a longer example: two ways to respond to a quantitative statement, paraphrasing a recent interaction online.

Looks quantitative; but no computation…

A dialogue concerning the two chief systems of thinking.

This is known to all participants: the population of interest is half a million bodybuilders whose one-rep maximum in the military press is distributed normally with mean 100 kg and standard deviation 20 kg. [3]

Simplicio states that there are no bodybuilders who can military press 150 kg or more.

Sagredo, responding to this statement, writes a very long post that refers to many different sources and studies and shows many charts and numbers. But he doesn't do the following computation: what number of bodybuilders with a military press of at least 150 kg does the distribution predict?

Salviati takes Simplicio's statement, which estimates (at zero) the number of bodybuilders who can military press 150 kg or more, and computes the number that the distribution predicts: 3,105.
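Salviati's computation is short enough to sketch in a few lines. This is only an illustration under the stated assumptions (normal distribution, mean 100 kg, standard deviation 20 kg, half a million bodybuilders); the variable names are mine, not part of the original interaction.

```python
from statistics import NormalDist

# Assumptions taken from the setup above; names are illustrative.
POPULATION = 500_000
THRESHOLD_KG = 150
press = NormalDist(mu=100, sigma=20)  # one-rep max, in kg

# Fraction of the population at or above the threshold (upper tail).
fraction_above = 1 - press.cdf(THRESHOLD_KG)

# Expected number of bodybuilders the distribution predicts.
expected_count = POPULATION * fraction_above

print(f"fraction at or above {THRESHOLD_KG} kg: {fraction_above:.4f}")
print(f"predicted count: {expected_count:,.0f}")  # about 3,105
```

The threshold sits 2.5 standard deviations above the mean, so the whole argument reduces to one tail probability multiplied by the population size.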

Had Salviati written Sagredo's post, he would have started with that number, because that's the key quantitative insight that we get from having a distribution. Then perhaps he'd go over the many studies and other sources, though more likely not.

Just like Carl Sagan would only need to tell O'Reilly “it's the gravity of the Moon,” in quantitative fields the computation makes the point. That, which many people don't understand, is the true power of quantitative thinking: computation.

The first instinct of a true quantitative thinker is to compute, not argue.

Showing numbers and charts without computation isn't quantitative thinking. Alas, in some fields, showing numbers and charts without computation is what passes for authoritative, because of the appearance — though not the reality — of quantitative thinking.

So let us look at quantitative thinking: the good, the bad, and the ugly.

The good: Paul Graham does the math

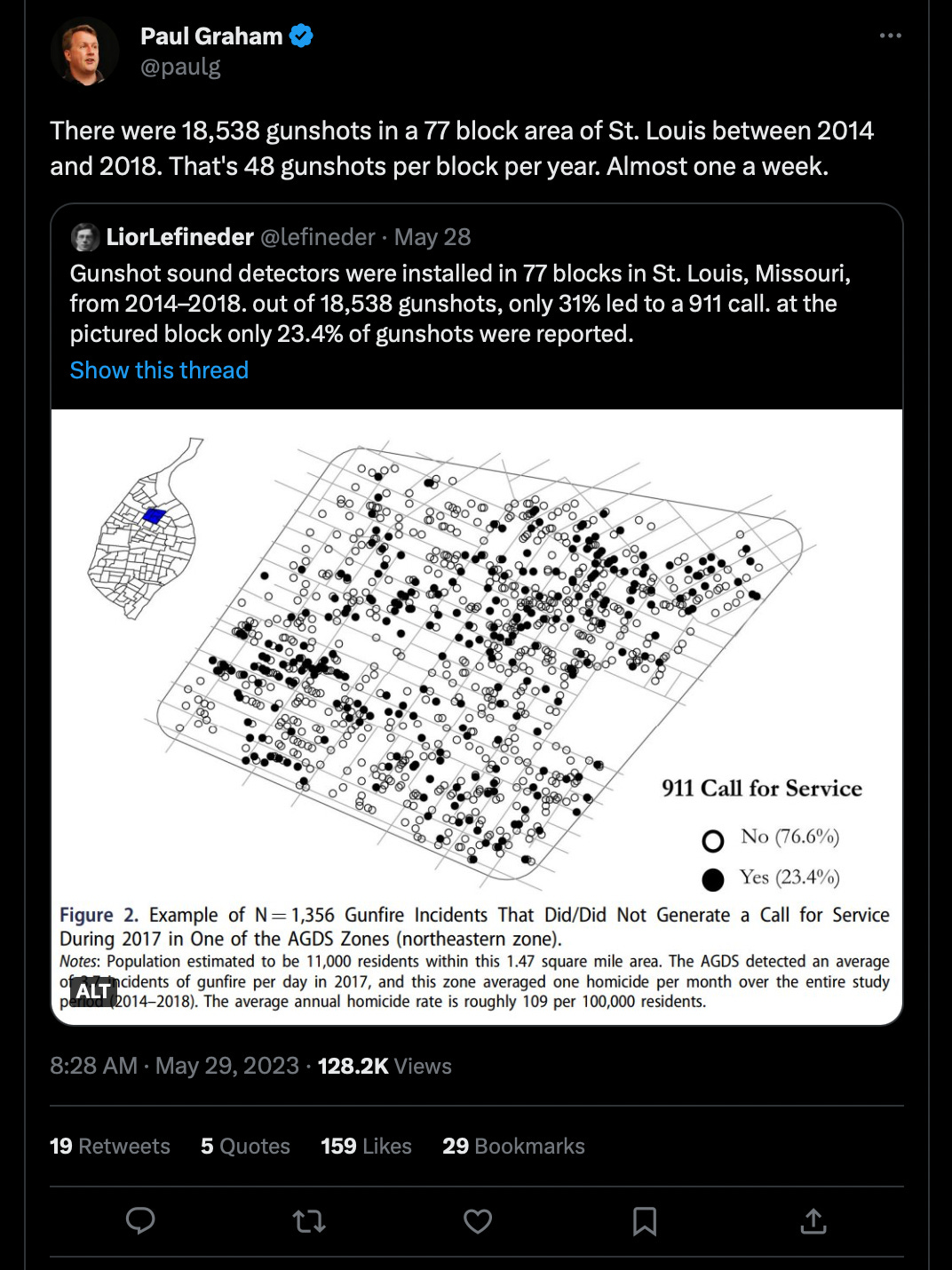

For an example of good quantitative thinking, and to show that it doesn’t need to be complicated, let us look at this tweet by Paul Graham of Y Combinator, also a purveyor of nerdy essays about nerd life:

https://twitter.com/paulg/status/1663205740696289280

By taking the two numbers in the data and using minimal computation, Graham concludes that the average number of gunshots per block is almost one per week. That’s a good start. We can go beyond this, by using a little more technical knowledge.

Assuming that the gunshots are independent events (possibly not a good assumption in reality, but it’s a starting point), we can take that 48 gunshots per year per block and find the distribution of gunshots per week: what percentage of weeks have no gunshots in a particular block; what percentage have one gunshot; etc.

When events are independent and potentially unlimited, the count of events follows a Poisson distribution, with its average equal to the observed, or “empirical” if we want to be fancy, average. The distribution is on the bottom right in the figure below.

An interesting observation: naively we might expect that since the average number of gunshots in a given block is close to one per week, there would be shots in almost all weeks; as we can see from the distribution, almost 40% of the weeks have no gunshots (for a given block, and assuming independence of events).
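A minimal sketch of that distribution, assuming 48 gunshots per block per year spread over 52 weeks (the 52 is my rounding) and independent events, so that weekly counts are Poisson. The helper name `poisson_pmf` is illustrative.

```python
import math

SHOTS_PER_BLOCK_PER_YEAR = 48  # from the data discussed above
WEEKS_PER_YEAR = 52            # assumption: a 52-week year
rate = SHOTS_PER_BLOCK_PER_YEAR / WEEKS_PER_YEAR  # mean shots per week

def poisson_pmf(k: int, lam: float) -> float:
    """Probability of exactly k events when the mean count is lam."""
    return math.exp(-lam) * lam**k / math.factorial(k)

# Share of weeks with 0, 1, 2, ... gunshots on a given block.
for k in range(5):
    print(f"{k} shots: {poisson_pmf(k, rate):.1%} of weeks")

quiet_weeks = poisson_pmf(0, rate)  # just under 40%
```

The share of quiet weeks is simply exp(-rate), which is why an average close to one per week still leaves almost 40% of weeks with no gunshots.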

This is a common misperception of randomness as equally distributed: if the gunshots were approximately uniformly distributed over all weeks, then they couldn’t be independent events, since having one gunshot in a given week would preclude other gunshots in the same week.

Also, the probability of a week with more than eight gunshots in a single block (comfortably more than one per day) is vanishingly small: 0.00005864% or 1 in 1,705,463. Checking that prediction against the actual data might be interesting, as a validity check on our assumptions.
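The tail probability can be checked the same way. This sketch again assumes a Poisson rate of 48/52 per week; `poisson_tail` is an illustrative helper that sums the upper tail directly. Under these assumptions, the 1-in-1.7-million figure corresponds to nine or more shots in a week; eight or more (strictly more than seven) comes out roughly ten times likelier, which is still tiny.

```python
import math

rate = 48 / 52  # assumed mean gunshots per block per week

def poisson_tail(k_min: int, lam: float, terms: int = 60) -> float:
    """P(X >= k_min) for a Poisson count, summing the tail term by term.

    Summing the tail directly avoids the rounding loss of 1 - CDF
    when the answer is very small; 60 terms is far more than needed here.
    """
    return sum(
        math.exp(-lam) * lam**k / math.factorial(k)
        for k in range(k_min, k_min + terms)
    )

p_nine_or_more = poisson_tail(9, rate)
p_eight_or_more = poisson_tail(8, rate)

print(f"P(9+ shots in a week) = {p_nine_or_more:.3e}, "
      f"about 1 in {1 / p_nine_or_more:,.0f}")
print(f"P(8+ shots in a week) = {p_eight_or_more:.3e}, "
      f"about 1 in {1 / p_eight_or_more:,.0f}")
```

Comparing either prediction with the observed frequency of such weeks would be one way to test the independence assumption.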

(Note that as said above, this analysis assumes gunshots are independent events, which anyone who watches police procedurals, or the Numb3rs episode “the OG,” episode 12 of season 2, will know is not a very realistic assumption.)

The Bad: Using contradictory numbers as mutual support

Let’s say we have a DC electric line and two high-precision voltmeters. When we use voltmeter A it tells us that the line voltage is 67±0.0001 V; when we use voltmeter B, it tells us the line voltage is 85±0.0001 V.

Can we conclude that the line voltage is lower than 100 V from these measurements?

No. The only thing these results tell us is that the voltmeters aren’t working properly: voltmeter A’s result invalidates voltmeter B’s result and vice versa. If each of them invalidates the other, there’s no reason to believe that anything they read is accurate. The voltage could be any value, because we can’t trust the voltmeters at all.

This is well understood in the hard fields (science and engineering), which is why calibrating and validating testing equipment is done on a regular basis.

Okay, this seems like a wasted example for something obvious, so it might surprise some of us to learn that it’s a common practice in some fields to take contradictory numbers and use them as corroborating each other.

(Readers of my book will remember that there’s a short chapter early on that makes this point regarding meta-analyses in fitness “science.” Get a free sample here, though the short chapter isn’t in the sample.)

A number of people who compare grip strength of different populations used some indirect data to estimate Minbari grip strength. Two studies of 1250 persons each put that grip strength average at 67 and 85 kg respectively, with standard deviation 15 kg. [4]

Some people within the field of grip strength studies use those numbers to say that average Minbari grip strength is definitely lower than 100 kg. But this is the voltmeter problem again.

With a sample size of 1250 and SD = 15, the standard error is 0.4244, which means that even for 99.9% confidence those two averages are significantly (may Bayes forgive us) different. In fact, they are significantly (may Bayes forgive us) different at a confidence level of 99.9999% and even 99.9999999%, i.e. one in a billion against. [5]
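Here is a hedged sketch of that comparison, using a normal approximation (reasonable at n = 1250) and my own variable names. Dividing by the square root of n − 1 reproduces the 0.4244 above; dividing by the square root of n gives 0.4243. The statistic computed here uses the standard error of the difference between two independent means, which is the more conservative choice: it comes out near 30, whereas dividing the 18 kg gap by a single study's standard error gives about 42.4. Either way it dwarfs every critical value.

```python
import math
from statistics import NormalDist

N_PER_STUDY = 1250
SD_KG = 15.0
MEAN_A_KG, MEAN_B_KG = 67.0, 85.0

# Standard error of each study's mean (sqrt(n - 1) matches the 0.4244 quoted).
se_mean = SD_KG / math.sqrt(N_PER_STUDY - 1)

# Standard error of the difference between two independent means,
# and the resulting test statistic for the 18 kg gap.
se_diff = math.sqrt(2) * se_mean
z_stat = (MEAN_B_KG - MEAN_A_KG) / se_diff

print(f"standard error of each mean: {se_mean:.4f}")
print(f"statistic for the gap: {z_stat:.1f}")

# Two-sided critical values, with alpha = 1 - confidence written directly
# to avoid floating-point error in the subtraction.
critical_values = {}
for alpha in (1e-3, 1e-6, 1e-9):
    critical_values[alpha] = -NormalDist().inv_cdf(alpha / 2)
    print(f"alpha = {alpha:g}: critical value {critical_values[alpha]:.3f}, "
          f"exceeded: {z_stat > critical_values[alpha]}")
```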

To illustrate the problem beyond the voltmeter equivalence, we can see that these distributions make different predictions about the fraction of the population that can do a task which requires a minimum grip level.

Let’s say that the minimum force needed to use a hand riveter is 85 kg. Then one result says that only 11.5% of Minbari can use a hand riveter while the other says 50% of Minbari can.
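Those two percentages follow from the upper tail of each study's distribution. A minimal sketch, assuming grip strength is normally distributed with the means and standard deviation reported above; the names are illustrative.

```python
from statistics import NormalDist

RIVETER_MIN_KG = 85  # minimum grip needed, from the example above
SD_KG = 15

# Fraction of the population at or above the minimum, per study mean.
able_fraction = {
    study_mean: 1 - NormalDist(mu=study_mean, sigma=SD_KG).cdf(RIVETER_MIN_KG)
    for study_mean in (67, 85)
}

for study_mean, fraction in able_fraction.items():
    print(f"mean {study_mean} kg: {fraction:.1%} can use the riveter")
```

With a mean of 67 kg the threshold is 1.2 standard deviations above average; with a mean of 85 kg it sits exactly at the average, hence the 50%.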

These two distributions predict wildly different levels of population engagement in the industries that rely on the use of hand riveters, and therefore on the level of Minbari economic development, as the Third Fastening Revolution involves an economy of rivets replacing an economy of knots.

In sum, these results make vastly different macroeconomic predictions about observable variables.

And yet, many in the grip strength studies field are happy to take these numbers, which contradict each other and therefore say nothing more than “the other number is wrong,” as evidence that Minbari have grip strength below 100 kg.

The more charitable interpretation of this is that people in these fields learn their “methods” by rote with no deeper understanding of what significance (may Bayes forgive us) means; worse, without understanding that two significantly (may Bayes forgive us) different numbers for one quantity can’t be used as evidence for anything about that quantity, because each is prima facie evidence that the other is wrong. [6]

That’s failing to understand what measuring a quantity means.

The Ugly: Tall tales about long tails

Given my vow to no longer engage with Tassim Ticholas Naleb’s nonsense, this section has been deleted. (Name cleverly disguised.)

Interested parties can read a version of it in the technical notes to Pitfall 9 in my book, with the warning that it’s one of the few parts of the book that requires some algebra. Free book sample here, but it doesn’t include the technical notes (no point in scaring potential readers…).

Old post, not as complete, clear, or well-illustrated as the section in the book; for people who want to learn more but don’t want to spend pi dollars.

[1] All countries report their GDPs to the nearest ten- or hundred-thousand local currency; the “precise” numbers come from converting local currencies to USD.

[2] Graphic design is a skill that needs practice and is actually useful in communicating with humans, so I took the opportunity to practice it. Background photo from here.

[3] This is a modified version of a real interaction where the topic wasn’t physical strength; the numbers are changed as well, but otherwise an accurate description of the process.

[4] This is based on real studies for a real variable and a real population, in a field that many people take to be serious and relevant to understand important phenomena in the real world. The studies aren’t about grip strength, but the actual variable doesn't matter to the argument here and grip strength is less emotional than the variable studied. The numbers themselves are unchanged from the real studies.

[5] The 99.9999999% confidence intervals (may Bayes forgive us) for the averages of the two studies are [64.407, 69.593] and [82.407, 87.593]. The critical value for a 99.9999999% two-sided test (may Bayes forgive us) is 6.109 and for a one-sided test (may Bayes forgive us) is 5.998, both to be compared with a t-statistic (may Bayes forgive us) of 42.409.

[6] As with the voltmeters, the solution here is to abandon these measurements, get a measurement instrument that has been validated and calibrated, and get a third, valid, measurement. But until that’s done, the prudent strategy is to assume both measurements are wrong. Not “imprecise,” not “biased,” wrong: data unusable, discard.